Rethinking a century of fluid flows

July 1, 2021

In 1922, English meteorologist Lewis Fry Richardson published Weather Prediction by Numerical Process. This influential work included a few pages devoted to a phenomenological model describing how multiple fluids (gases and liquids) flow through a porous-medium system and how the model could be used to predict weather patterns.

Since then, researchers have continued to build on and expand Richardson’s model. Its principles have been used to pipe petroleum effectively, design environmental engineering solutions, and advance hydrology and soil science.

Dr. Cass Miller

Dr. William Gray

Cass Miller, PhD, Okun Distinguished Professor, and William Gray, PhD, retired professor, both work in the Department of Environmental Sciences and Engineering at the UNC Gillings School of Global Public Health. Together, they are working to develop a more complete and accurate method of fluid flow modeling.

Through a United States Department of Energy (DOE) INCITE award, Miller and his team have been granted access to the IBM AC922 Summit supercomputer at the Oak Ridge Leadership Computing Facility, a DOE Office of Science User Facility located at Oak Ridge National Laboratory. The sheer power of the 200-petaflop machine means Miller can approach the subject of two-fluid flows (mixtures of liquids or gases) in a way that would have been inconceivable in Richardson’s time.

Breaking tradition

Miller’s work focuses on the way scientists model two-fluid flows through porous media (rocks or wood, for example). Numerous factors influence how fluids move through porous media, but not all computational approaches consider these factors. In general, the basic phenomena that affect the transport of these fluids, such as the transfer of mass and momentum, are well understood by researchers and can be calculated accurately.

“If you look at a porous-media system at a smaller scale,” Miller said, “like a continuum scale where, for example, a point exists entirely within one fluid phase or within a solid phase, we understand transport phenomena on that scale relatively well — we call that the microscale. Unfortunately, we can’t solve very many problems at the microscale. As soon as you start thinking about where the solid particles are and where each fluid is, it becomes computationally and pragmatically overwhelming to describe a system at that scale.”

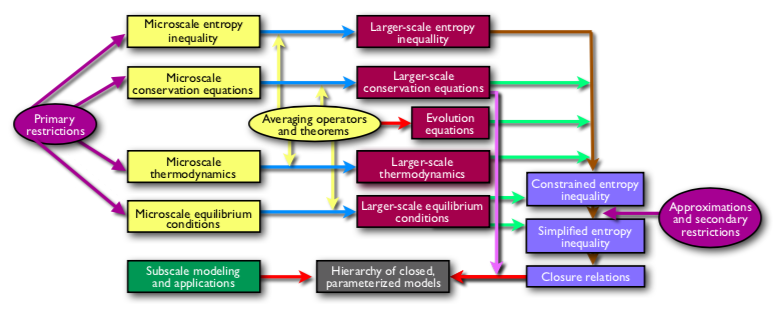

The Thermodynamically Constrained Averaging Theory (TCAT) framework for model building, closure, evaluation and validation.

To resolve this scale issue, researchers have traditionally approached most practical fluid flow problems at the macroscale, a scale at which computation becomes more feasible. Because numerous real-world applications require answers to multiple-fluid flow problems, scientists have had to sacrifice certain details in their models to obtain tractable solutions. Further, Richardson’s phenomenological model was written down at the larger scale with no formal derivation, meaning that fundamental microscale physics is not represented explicitly in traditional macroscale models.

In Richardson’s day, these omissions were sensible. Without modern computational methods, linking microscale physics to a large-scale model was a nearly unthinkable task. But now, with help from Summit — the fastest supercomputer in the world for open science — Miller and his team are bridging the divide between the microscale and macroscale. To do so, they have developed an approach known as Thermodynamically Constrained Averaging Theory (TCAT).

“The idea of TCAT is to overcome these limitations,” Miller said. “Can we somehow start from physics that are well or better understood and get to models that describe the physics for the systems we’re interested in at the macroscale?”

The TCAT approach

To solve problems of interest to society, Miller’s team needed a way to translate first principles at the microscale into large-scale mathematical models.

“The idea behind the TCAT model is that we start from the microscale,” Miller said. “We take that smaller-scale physics, which includes thermodynamics and conservation principles, and we move all of that up to the larger scale in a rigorous mathematical fashion.”

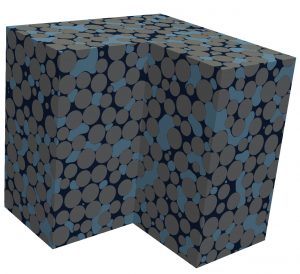

In this two-fluid flow simulation, gray spheres represent solid media, while the wetting-phase fluid and non-wetting-phase fluid are shown in dark and light blue, respectively.

“We want to evaluate this new theory by pulling it apart and looking at both individual mechanisms and at larger systems and the overall model,” he added. “The way that we do that is computation on a small scale. We routinely do simulations on lattices that can have up to billions of locations — in excess of a hundred billion lattice sites in some cases. That means we can accurately resolve the physics at a refined scale for systems that are sufficiently large to satisfy our desire to evaluate and validate these models.”
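To give a rough sense of what it means to turn a resolved lattice into larger-scale quantities, the sketch below averages a toy lattice of phase labels into two macroscale numbers: porosity and wetting-phase saturation. It is a plain volume average for illustration only, not the TCAT formulation or the team's simulation code. The phase labels, the function names `make_lattice` and `average_phases`, and the random toy medium are all assumptions introduced here.

```python
import random

# Hypothetical phase labels for each lattice site (assumed for illustration).
SOLID, WETTING, NONWETTING = 0, 1, 2


def make_lattice(n, solid_fraction=0.4, wetting_fraction=0.5, seed=0):
    """Build an n x n x n lattice of random phase labels.

    This is toy data, not a realistic porous medium: each site is solid
    with probability solid_fraction, otherwise it holds one of two fluids.
    """
    rng = random.Random(seed)
    lattice = []
    for _ in range(n ** 3):
        if rng.random() < solid_fraction:
            lattice.append(SOLID)
        elif rng.random() < wetting_fraction:
            lattice.append(WETTING)
        else:
            lattice.append(NONWETTING)
    return lattice


def average_phases(lattice):
    """Return (porosity, wetting saturation) averaged over all sites.

    porosity   = pore sites / all sites
    saturation = wetting-fluid sites / pore sites
    """
    total = len(lattice)
    solid = lattice.count(SOLID)
    wetting = lattice.count(WETTING)
    pore = total - solid
    porosity = pore / total
    saturation = wetting / pore if pore else 0.0
    return porosity, saturation


lattice = make_lattice(20)
porosity, saturation = average_phases(lattice)
print(f"sites: {len(lattice)}, porosity: {porosity:.3f}, "
      f"wetting saturation: {saturation:.3f}")
```

The toy lattice here has 8,000 sites. The simulations Miller describes resolve billions of sites, which is why a machine on the scale of Summit is needed.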

Miller said that the Summit supercomputer provides a unique resource that enables researchers to perform highly resolved microscale simulations. Recently, Miller and his team were awarded another 340,000 node hours on Summit through the 2020 INCITE program.

“While we have the theory worked out for how we can model these systems at a larger scale, we are working through INCITE to evaluate and validate that theory and ultimately reduce it to a routine practice that benefits society,” Miller said.

UT-Battelle LLC manages Oak Ridge National Laboratory for the DOE’s Office of Science, the single largest supporter of basic research in the physical sciences in the United States. The Office of Science is working to address some of the most pressing challenges of modern times. For more information, visit https://energy.gov/science.

This story was first posted by Oak Ridge National Laboratory.

Contact the UNC Gillings School of Global Public Health communications team at sphcomm@unc.edu.